Reliability Is Managed In Services, But Felt In Transactions

Severin Neumann

February 19, 2026

Modern reliability practice is incredibly good at one thing: making complex systems operable. We break systems into services, assign owners, instrument the surfaces, and build crisp operational feedback loops. When something goes wrong, we can usually answer: which service is unhealthy, and which team should act? That’s what makes running large, distributed systems tractable in the first place. But product reliability asks a different question:

Did the user succeed?

That question often gets neglected. Not because teams don’t care, but because most reliability management is organized around services, while the user experiences transactions.

Reliability Is Felt in Transactions, Not Services

Consider an end-user experience like placing an order in an application. From the user’s perspective, this is one thing they are trying to accomplish. They add an item, confirm, and expect the order to go through. For them, the outcome is binary: the order worked, or it didn’t.

From a system perspective, that same experience fans out across many services. Inventory and pricing services check availability, payment authorization happens synchronously, a machine-learning-based fraud detection system evaluates the order in real time, and downstream fulfillment, recommendation updates, and notification steps continue asynchronously.

Each service might look “fine” when viewed in isolation. Latency could be slightly elevated in one place, retries could be masking failures in another, and asynchronous steps could be lagging, all without any single service showing a clear red flag.

This is not a theoretical edge case. It’s a natural consequence of composing many independently reliable components into an end-to-end experience.
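To make the compounding effect concrete, here is a minimal arithmetic sketch. It assumes the order flow needs six steps to all succeed, that each step succeeds 99.9% of the time, and that failures are independent. These numbers and the independence assumption are illustrative only, not measurements from any real system.

```python
from math import prod

def end_to_end_success(step_success_rates):
    """Probability that every required step succeeds, assuming independent failures."""
    return prod(step_success_rates)

# Hypothetical figures: six required steps, each succeeding 99.9% of the time.
steps = [0.999] * 6
overall = end_to_end_success(steps)

print(f"per-step success:   {steps[0]:.4f}")
print(f"end-to-end success: {overall:.4f}")  # about 0.9940
```

Under these assumptions, every step meets a “three nines” target while the flow as a whole fails roughly six times per thousand attempts. Real systems with correlated failures, retries, and optional steps will deviate from this simple product.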

Reliability is managed at the service level, but it is felt at the level of transactions and flows.

Why Transaction-Level Reliability Doesn’t Collapse Into One Simple Metric

You can try to model this by defining reliability objectives for individual transactions, and in some cases that works well. A critical HTTP path on a frontend service can and should have a clear objective.

But systems rarely stop there. Real products are full of flows that are asynchronous, multi-step, or partially decoupled. Password reset workflows, AI-assisted report generation, onboarding sequences, and background processing pipelines don’t fit neatly into a single request-response boundary.

In these cases, the user-facing “success” signal isn’t always available at the moment a request returns. You often only know whether the user truly succeeded after downstream work completes and state converges—sometimes minutes later, sometimes after multiple systems reconcile.

This is also where many SLO programs end up skewing toward internal service-level contracts. Those are useful for ownership and operations, but they do not always capture whether the user’s intent completed successfully end to end.

So the challenge isn’t “measure reliability.” It’s: measure reliability at the level where success is actually determined.
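One way to picture a flow-level indicator for asynchronous work is to judge each flow only once its outcome can be known. The sketch below is a hypothetical illustration, not a description of any particular product: `FlowRecord`, `converged_at`, and the deadline value are all assumed names and numbers. A flow counts as good if its state converged within the deadline, as bad if the deadline passed without convergence, and is left out while it is still in flight.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

@dataclass
class FlowRecord:
    flow_id: str
    started_at: float              # seconds since some epoch
    converged_at: Optional[float]  # None while downstream work is unfinished

def flow_success_ratio(records: Iterable[FlowRecord],
                       deadline_seconds: float,
                       now: float) -> Optional[float]:
    """Share of judged flows whose state converged within the deadline."""
    good = 0
    judged = 0
    for r in records:
        if r.converged_at is not None and r.converged_at - r.started_at <= deadline_seconds:
            good += 1
            judged += 1
        elif now - r.started_at > deadline_seconds:
            judged += 1  # converged too late, or not at all
        # otherwise: still within the deadline, so not judged yet
    return good / judged if judged else None

# Hypothetical data: one timely flow, one late, one stuck, one still in flight.
records = [
    FlowRecord("a", started_at=0, converged_at=30),
    FlowRecord("b", started_at=0, converged_at=900),
    FlowRecord("c", started_at=0, converged_at=None),
    FlowRecord("d", started_at=950, converged_at=None),
]
ratio = flow_success_ratio(records, deadline_seconds=300, now=1000)
print(ratio)
```

In this sketch the ratio is one good flow out of three judged flows. Flow `d` is excluded because its deadline has not yet passed, which reflects the point that success is sometimes only knowable later.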

Product Ownership Doesn’t Map Cleanly to Services

There is another dimension where this becomes visible: products and features are rarely owned by a single service. They span multiple microservices, owned by different teams, evolving at different speeds. Developers and product managers tend to think in terms of features and user journeys, not in terms of which service is emitting which metric.

In a DevOps model, that creates friction. The people responsible for improving a product’s reliability need to understand how changes affect end-to-end behavior. Service-level views are necessary for fixing issues, but they are not sufficient for reasoning about how reliable a feature or product feels to users over time.

And this is where a lot of reliability programs stall: they’re excellent at service health and incident response, but weaker at answering:

- Which user-facing flows are degrading?

- Which products are accumulating reliability risk?

- How is reliability trending from the user’s perspective?

- Where are we “green” locally but failing end-to-end?

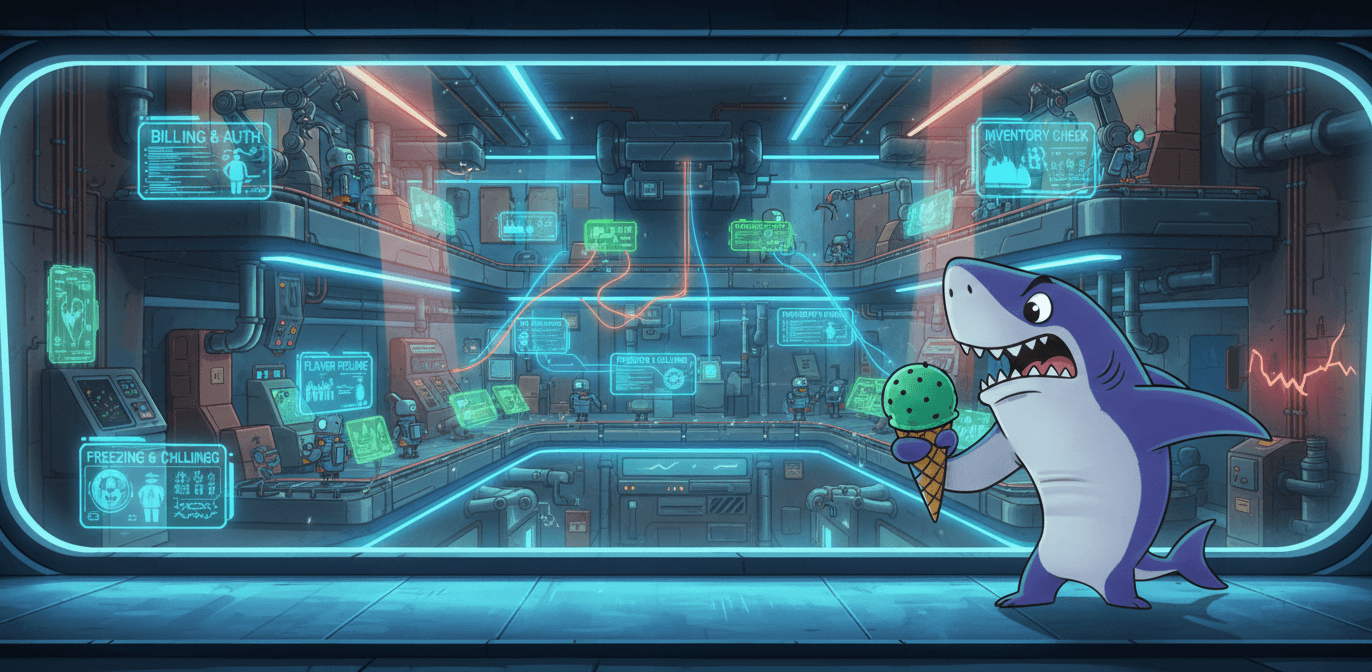

Consider a subscription upgrade flow initiated through a support chatbot. A customer messages: “Upgrade me to Pro”. The bot confirms success immediately.

Behind the scenes, that single interaction fans out across a chain of services, agents, and actions: billing is updated, an entitlements agent validates the new plan and pushes updated permissions, usage limits refresh, and internal automation agents orchestrate background jobs that reconcile state across systems.

No single service is down. The agents are executing as designed. Everything looks acceptable in isolation.

But minutes or hours later, the customer hits a limit they shouldn’t have, or a feature remains locked. Support tickets spike. Revenue recognition is delayed. Trust erodes.

From a service view, everything looks acceptable. From a product view, the upgrade flow is unreliable.
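The difference between the two views can be sketched as a small check that compares what each step reported with what the customer can actually use. Everything here is hypothetical: the step names, the status strings, and the `classify_upgrade` helper are assumptions made for illustration.

```python
from typing import Dict

def classify_upgrade(step_status: Dict[str, str],
                     requested_plan: str,
                     effective_plan: str) -> str:
    """Compare per-step health with the user-visible end state."""
    steps_green = all(status == "ok" for status in step_status.values())
    user_succeeded = effective_plan == requested_plan
    if user_succeeded:
        return "succeeded"
    # The user did not get what they asked for; note whether any step admitted it.
    return "green_but_failed" if steps_green else "failed_with_step_errors"

# Hypothetical scenario: every step reports "ok", yet the customer is still on the old plan.
steps = {
    "billing": "ok",
    "entitlements": "ok",
    "usage_limits": "ok",
    "reconcile_jobs": "ok",
}
outcome = classify_upgrade(steps, requested_plan="pro", effective_plan="basic")
print(outcome)  # green_but_failed
```

The design choice in this sketch is that the verdict depends on the end state the customer observes, while step statuses only help explain a failure.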

Closing the Gap Requires Product- and Flow-Centric Views

Addressing that gap requires additional lenses. Reliability systems need ways to look at signals through transaction-centric and product-centric views: views that reflect how users experience the system and how teams build products across services, not only how services behave in isolation.

Services remain the building blocks. Service-level discipline remains essential. But to understand what users are actually feeling, and how reliable a product truly is, reliability needs to be observable at the level where experience happens: across transactions, flows, and products composed from many services working together.

We’ve been working on addressing this gap in our own product. We are exploring how reliability systems can make transaction- and product-level views first-class, while strengthening the service foundations teams rely on today. We’ll share more about that soon.

In the meantime, we’re curious whether this resonates: Does this match what you’re seeing in your own systems, or do you think service-level reliability already covers more of this than we’ve described?

Let us know! We’d love to hear what you think: reach out to us via email or discuss with us on LinkedIn!